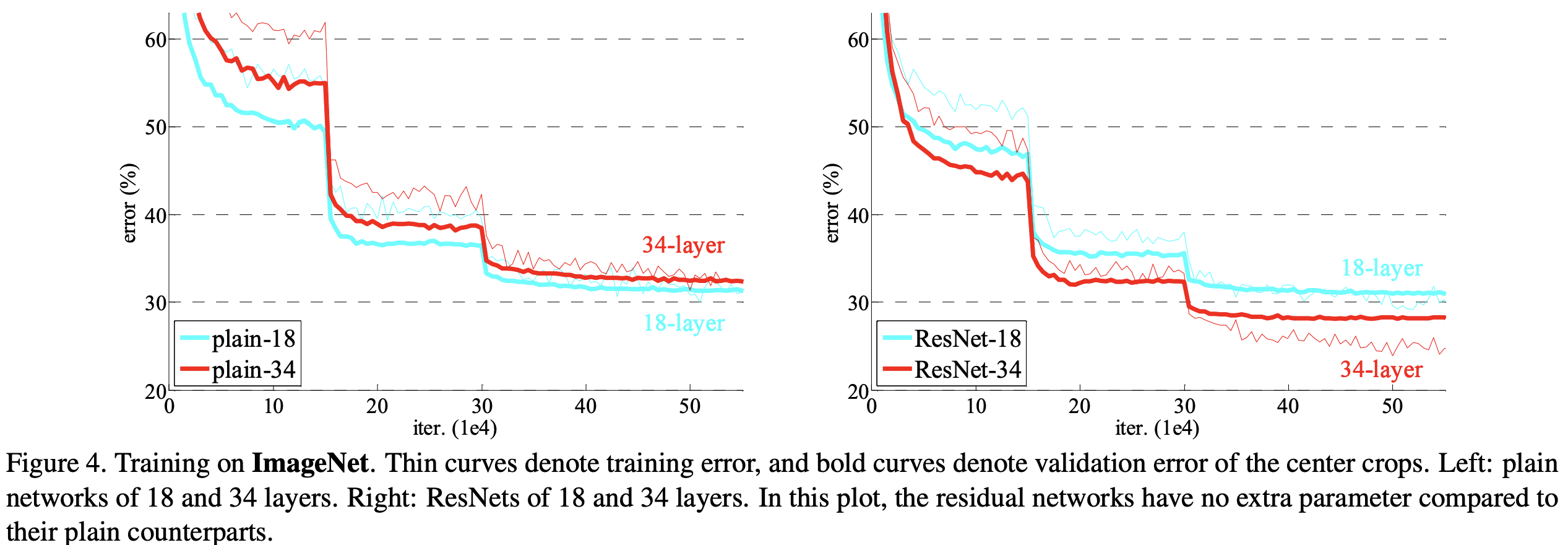

VGG-Net and GoogLeNet reduced the top-5 error rate further by using deeper networks. So if we just stack more and more layers, does that help? It turns out it doesn't. One reason is the vanishing gradient problem, which was studied by Sepp Hochreiter in 1991 and has been discussed over the years. This problem makes it difficult for a network to converge from the start. It has been addressed through various initialization methods and through batch normalization. Even when a deeper network starts converging, researchers have found that accuracy degrades. In particular, as the depth of a model increases, the accuracy first saturates and then degrades rapidly. As evident from the learning curves, this is not due to overfitting.
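To make the vanishing gradient effect concrete, here is a minimal toy sketch. It is not taken from Hochreiter's analysis or from the ResNet paper: it assumes a chain of scalar sigmoid layers with one shared weight, and the function name `input_gradient` and its parameters are hypothetical. Because the sigmoid derivative is at most 0.25, the gradient with respect to the input shrinks at least geometrically with depth. Setting the optional `skip` flag adds the layer input back to its output, as a residual connection does, so that each layer contributes a factor of at least 1.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic sigmoid."""
    return 1.0 / (1.0 + math.exp(-z))


def input_gradient(depth: int, weight: float = 1.0, x: float = 0.5,
                   skip: bool = False) -> float:
    """Return d(output)/d(input) for a chain of `depth` scalar sigmoid layers.

    Toy model (an assumption for illustration): every layer computes
    sigmoid(weight * a). With skip=True each layer computes
    a + sigmoid(weight * a) instead, i.e. it adds an identity shortcut.
    """
    activation = x
    grad = 1.0
    for _ in range(depth):
        s = sigmoid(weight * activation)
        # Derivative of sigmoid(weight * a) with respect to a.
        local = weight * s * (1.0 - s)
        if skip:
            grad *= 1.0 + local          # the identity path contributes the 1
            activation = activation + s
        else:
            grad *= local                # at most 0.25 when |weight| <= 1
            activation = s
    return grad


if __name__ == "__main__":
    for depth in (5, 20, 50):
        plain = input_gradient(depth)
        with_skip = input_gradient(depth, skip=True)
        print(f"depth={depth:3d}  plain={plain:.3e}  skip={with_skip:.3e}")
```

In this toy setting the plain chain's gradient is bounded by 0.25 raised to the depth, while the shortcut version never drops below 1. Real networks use weight matrices and other activations, so this illustrates the trend rather than giving a quantitative prediction.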

As shown in the figure below, we can see that

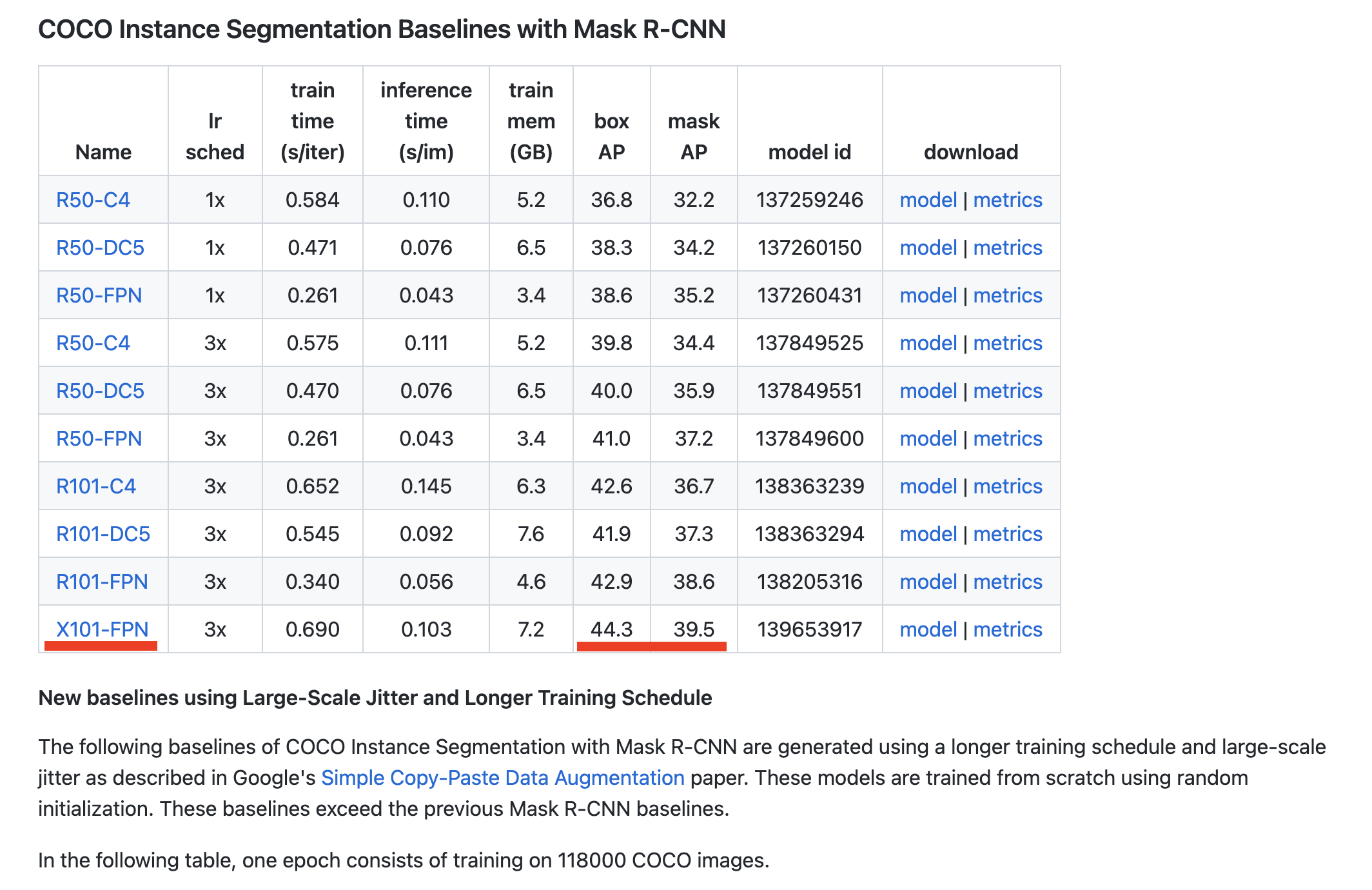

Deep Residual Learning for Image Recognition. This is the classic ResNet, or Residual Network, paper (He et al. 2015), which describes a method for making convolutional neural networks with a depth of up to 152 layers trainable. The residual networks described in this paper won the ILSVRC 2015 classification task and many other competitions.

Insect recognition is crucial for taxonomy. It helps researchers process large and varied ecological data. Most studies focus on fine-tuning the deep learning network or altering the algorithm to improve identification accuracy, and some useful tools have been produced with these methods. This study focuses on the influence of image data on the recognition model. Existing automated identification tools rely on relatively simple, single-source data sets, and the competition-based data sets released so far focus only on evaluating the model. For the first time, this article integrates butterfly image data sets from multiple sources, including illustrated books and popular butterfly science websites. The image types include standard specimen images, scanned images from illustrated books, and camera shots. In addition, these images include not only fixed poses but also butterflies in natural poses. The images also vary in size.

We designed different data sets and used the ResNet18 network to train a classifier, which achieves a validation accuracy of 86% at the end of the analysis. Adjusting the data sets changes the accuracy as well. The testing data set is new data that does not belong to the training set, which also verifies the generalizability of the model, indicating that in practical applications this model can identify new images. This testing method is a breakthrough compared to the previous work. This study provides a method to recognize hundreds of butterfly species and analyzes the testing progress from the point of view of data.